You know it is important to test your machine learning model with data it has never seen before. I discussed this in these posts, An Optimization Challenge: Training VS Testing, and Why Our ML Models Must Be Tested With Unseen Data.

So, the question now is, "How should I split the full dataset into a TRAIN and TEST dataset?"

The question seems so simple... but it's not. How the split is performed can make all the difference towards achieving a valid and useful model score.

In this post, I present a very straightforward strategy to split the full dataset.

However, as I will demonstrate, using this strategy can result in model scoring results being good, just fine or poor. In other words, the results are worthless.

But why? Why should we care? That's what this post is all about.

The Back Story

Many years ago, I distinctly remember standing in front of a group of people presenting my predictive analysis findings. The findings were based on a regression analysis model.

Someone asked the question I will never forget, "How did you validate your model?" At that point in my career, I had never been asked or even exposed to this question and what it implies.

Every book I had read was focused on the math, transformation and what the analysis meant. Not once was there a mention of checking the model using unseen data.

In all fairness, looking back at the books I was referencing, they were not about looking into the future. They were focused on what the existing sample set was "telling me."

But my work had always been focused on, what will likely occur in the future. Clearly I had much to learn.

I quickly learned the need to validate or test my model with unseen data.

But how should the data be split?

Top Down Split: Thinking About It

When I understood the need to split my full dataset into a TRAIN and TEST dataset, my first strategy was a simple top to bottom approach.

Here how it works. Suppose I want a 70/30 split. First, get your full dataset. The top 70% is now your TRAIN dataset and the remaining 30% is your TEST dataset.

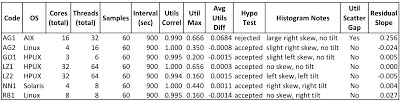

Here is a very simple example. Below is the full dataset. (I know it stupid small, but it makes my point.)

Now I will split the data using the simple top down approach. The image below is split 70/30 top to bottom. The green-ish data will be my TRAIN dataset and the yellow-ish data will be my TEST data.

Do you for see any problems with this approach?

Before I discuss the potential problems, let's look how this can be implemented using Python.

Top Down Split: Using Python

Here is how to do the split using Python.

If you want to run this yourself using your own Python Machine Learning Sandbox you can download the entire Jupyter Notebook HERE.

The code below assumed the full dataset consists of 1259 rows. There are three "features" stored in the X array. There is one label (either green, yellow or red) stored in the y array.

# Model is testing with data it has never seen before... good

# But the split is likely not realistic. Think: Date/time ordered data

# The results could be better or worse. Regardless, invalid test.

print("Top Down Percentage Split Dataset Train And Test")

print()

test_size = 0.20

split_row = int(X.shape[0]*(1-test_size))

X_train = X[0:split_row,]

X_test = X[split_row:,]

y_train = y[0:split_row,]

y_test = y[split_row:,]

ScoreAndStats(X, y, X_train, y_train, X_test, y_test)

When the notebook code is run, the key results are:

Top Down Percentage Split Dataset Train And Test

Shapes X(r,c) y(r,c)

Full (1259, 3) (1259,)

Train (1007, 3) (1007,)

Test (252, 3) (252,)

The above output was created with the below code:

print("Shapes X(r,c) y(r,c)\n")

print("Full ", X.shape, y.shape)

print("Train ", X_train.shape, y_train.shape)

print("Test ", X_test.shape, y_test.shape)

When the model is trained and then tested, the TEST data accuracy score is 0.65. Not a great score, but that's not the point.

The point is the score is invalid. Why? Read on.

Top Down Split: The Problems

The main problem is our model will be trained with "daytime" data and tested with "evening" data.

But it could get a LOT worse. Consider these very real scenarios:

-

What if the TRAIN data consisted of a mixed database workload and the TEST data consisted of a batch centric workload? The model will be heavily biased toward a mixed database workload. Bad.

-

What if the TRAIN data consisted of an "in country" database workload and the TEST data consisted of an "out of country" workload. The model will be heavily biased toward an "in country" database workload. Bad.

-

What if the TRAIN data consisted of January, February, March and April AWR data and the TEST data consisted only of May AWR data. The model will be heavily biased toward the January to April database workload. Bad.

In each of the above cases. The model is trained and tested using two potentially very different workloads. This means when we score the model using the unseen TEST data, the results could be good, ok or bad.

This means the score results are worthless and could easily mislead us into thinking we have developed a fantastic model.

This is a situation we should never allow ourselves to be in.

Knowing this, we must use a fundamentally different approach to split our data.

Top Down Split: Summary

It is very easy to get into this invalid model scoring situation because, being Oracle professionals, our data is usually stored, extracted and saved in some order and usually it is date/time order.

So we must take steps to ensure we don't get caught in the simple "Top Down Split" trap!

In my next post, I will discussion another strategy I call the, "Random Shuffle Split Strategy."

All the best in your machine learning work,

Craig.