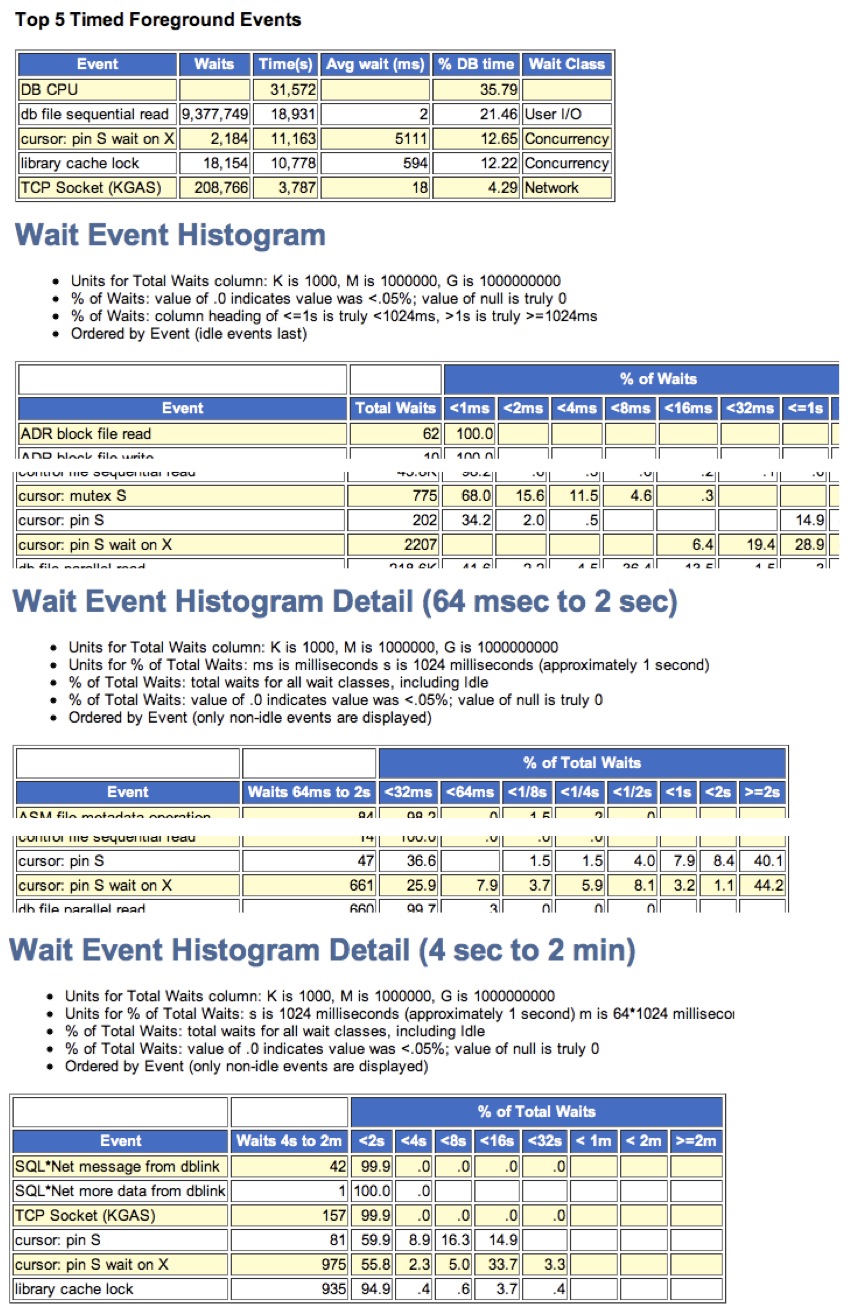

You know the average IO read response time is 2.4ms because you read my last article and then figured it out. (Easy wasn't it?)

But you have a hunch the reads are sometimes slower. Much slower. So, how can you prove this, and if it is true, is it an issue worthy of your time?

How do you prove there are some super slow read IO response times using only Oracle’s performance v$ views? How do you know if it’s truly an issue anyway? That is what this article is all about.

So, here we go!

Don't Panic Over The Average Read Times

Before you get all in a big huff and storm into the IO team's realm, you need more than just the average db file sequential read wait time. Why? Well... watch this video and you will get a sense of what I'm talking about.

As my last article demonstrated, it is easy to get Oracle foreground process high quality average single block synchronous read response times. That's the average wait time for the event, db file sequential read. You probably know this can be found on any AWR, Statspack or self respecting Oracle performance product.

However, even with a fast average read time, there are likely some painfully slow read times as well. But how do we know?

Oracle's Got The Data Baby!

For each wait event, Oracle maintains buckets that contain wait event counts for a given wait time range. When any wait event occurs, the Oracle process increments the appropriate counter.

This details are stored in the view v$event_histogram and also in the AWR table, DBA_HIST_EVENT_HISTOGRAM.

For example, the first bucket is where waits go when the wait time is from 0ms to less than or equal to 1ms. The second bucket goes from greater than 1ms to less than and or equal to 2ms. The third bucket goes from greater than 2ms to less than or equal to 4ms. And on and on.

I am not going to write about creating or interrupting histograms in this article. I have created lots of content about that. There are presentations, blog posts (search for histogram), webinars and video seminars.

What I am going to explain is how you can tell if there are slow read times, how many and if you should confront the IO team about it.

As a side note, the AWR event histogram data is usually included in an AWR report. But it can easily be used incorrectly and the raw figures are very useful in my example.

First, We Need Some Tools

Download the latest version of my OraPub System Monitor (OSM) toolkit HERE.

When you run the menu script, osm.sql, you will notice a number of event histogram tools. There are tools for directly sampling wait times from v$system_event, v$event_histogram and also pulling data from dba_hist_event_histogram.

There is a dedicated "read me" file for all my event histogram tools named, readmeEventHist.txt.

Currently, here is the relevant tool list:

Wow! That's a lot of tools. Sometimes I forget how much work I've done in this area.

Get Some Read Data From AWR

Sometimes I need to get past "more than the average wait time" data. For example, data from yesterday between 0900 and 1000. This is when I will use AWR data from the table dba_hist_event_histogram. To extract the data, I'll use my OSM tool, swEhAwrGet.sql.

Here is a real life example using real production data with the explanation below.

SQL> @swEhAwrGet

DBID INSTANCE_NUMBER FIRST_SNAP_ID BEGIN_INTERVAL_TIME LAST_SNAP_ID BEGIN_INTERVAL_TIME

---------- --------------- ------------- -------------------- ------------ --------------------

9217280430 1 2464 28-Dec-2015 16:00:07 3369 04-Feb-2015 09:00:43

8543270432 1 31089 12-Jul-2015 02:00:10 32532 11-Aug-2015 09:30:13

8543270432 2 31089 12-Jul-2015 02:00:10 32532 11-Aug-2015 09:30:13

8543270432 3 31089 12-Jul-2015 02:00:10 32532 11-Aug-2015 09:30:13

ENTER the database ID (DBID) : 8543270432

ENTER the instance number : 1

ENTER the approximate starting date/hour (DD-Mon-YYYY HH24) : 21-Jul-2015 10

ENTER the approximate ending date/hour (DD-Mon-YYYY HH24) : 21-Jul-2015 11

SNAP_ID TO_CHAR(BEGIN_INTERV TO_CHAR(END_INTERVAL

---------- -------------------- --------------------

31523 21-Jul-2015 09:00:04 21-Jul-2015 09:30:00

31524 21-Jul-2015 09:30:00 21-Jul-2015 10:00:02

31525 21-Jul-2015 10:00:02 21-Jul-2015 10:30:24

31526 21-Jul-2015 10:30:24 21-Jul-2015 11:00:29

31527 21-Jul-2015 11:00:29 21-Jul-2015 11:30:02

ENTER the beginning snap_id : 31525

ENTER the ending snap_id : 31526

ENTER the wait event (default: db%seq%read) :

Copy and paste the below R code directly into the swEhCharts.r

It must be the last entry in the #2 area of swEhCharts.r

### START ###

eName = "db%seq%read"

count0to1 = 6722450

count1to2 = 1003400

count2to4 = 269448

count4to8 = 628325

count8to16 = 786237

count16to32 = 81117

count32to64 = 7057

count64to128 = 1625

count128to256 = 916

count256to512 = 1143

count512to1024 = 2417

count1024to2048 = 1516

count2048to4096 = 29

count4096to8192 = 53

count8192to16384 = 0

count16384to32768 = 0

count32768to65536 = 0

count65536to131072 = 0

count131072to262144 = 0

...

The associated AWR report shows the average db file sequential read wait time is 2.8ms. And if you follow the instructions in the swEhCharts.r script and generate the related statistics, you will find the typical or median value is only 0.706ms. That's quite a difference.

But that's not why I'm writing this article.

Get Mad... Real Mad.

I'm writing this article because of the over 1500 db file sequential read times over 1 second. And the over 50 sequential reads over 4 seconds.

I would guess that 95% of Oracle DBAs would completely freak out if they knew over 1500 times their sequential reads were over 1000 ms and especially if they knew over 50 times the sequential reads were over 4 seconds! Even more so, when they learn this happened over a one hour window!

I suspect the DBA would storm over to the IO Administrator and start screaming that IOs are taking over 4 seconds! This situation will not end well for my brother DBA.

Run Away... Fast!

A smart IO Admin will say something like, "Let me see your numbers." They will put them into Excel and quickly determine that over 75% of the sequential reads are less than 1.4ms and 50% (median) of the sequential reads were less than 0.7ms. Now they are motivated!

With a little Excel magic, the IO Admin will tell you that over 80% of the IOs are less than 2ms and that over 90% of the IOs are less than 8ms.

Then with a fresh breath of superiority he will demonstrate, using your own data, that only 0.00086% of the IOs are greater than 1 second and, oh yeah, those 4 second IOs? Those occurred less than 0.00000% of the time! (That's not a typo, 0.00000%)

Sure, you could still ask him to check the IO cabling, but I wouldn't recommend it.

The Need For Perspective And Priority

No one likes four second single block reads. But for the sake of all the Oracle DBAs in the world, please don't take this data to your IO Admin! Take a breath and put this into perspective. I suspect there are much better performance improving opportunities out there for you to tackle.

The bottom line is this:

Concurrency happens and caching doesn't always happen.

Combine those and you can expect a few bad IO times.

All the best in your Oracle performance tuning work!

Craig.